Updating the firmware of the UCS IO modules, adapters, BIOS, CIMC controllers and Fabric Interconnects (FI) is straightforward, and you can do it all from UCS Manager.

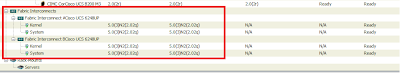

The firmware before upgrade is 2.0(2q). I'm upgrading to 2.0(3a).
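If you want to sanity-check that the target really is newer than what is installed, a small script can compare the two version strings. This is only a sketch: `parse_ucs_version` and `is_upgrade` are hypothetical helper names of my own, not anything from UCS Manager, and the parsing assumes the `major.minor(maintenance + letters)` layout seen in 2.0(2q) and 2.0(3a).

```python
import re

# Assumed layout: "<major>.<minor>(<maintenance><patch letters>)", e.g. "2.0(3a)".
_VERSION_RE = re.compile(r"^(\d+)\.(\d+)\((\d+)([a-z]*)\)$")


def parse_ucs_version(text):
    """Split a version string into a tuple that compares in release order."""
    match = _VERSION_RE.match(text.strip())
    if match is None:
        raise ValueError(f"unrecognised version string: {text!r}")
    major, minor, maintenance, patch = match.groups()
    # Numbers compare numerically, the patch letters compare alphabetically.
    return (int(major), int(minor), int(maintenance), patch)


def is_upgrade(current, target):
    """Return True when target is a later release than current."""
    return parse_ucs_version(target) > parse_ucs_version(current)


if __name__ == "__main__":
    print(is_upgrade("2.0(2q)", "2.0(3a)"))
```

Comparing tuples keeps the logic short: the maintenance number 3 beats 2 before the patch letters are even looked at.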

1) The image below shows the old firmware.

Click on Equipment -- Firmware Management -- Installed Firmware.

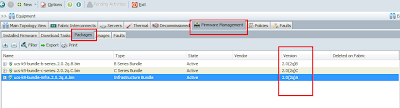

Click on the Packages tab in the same pane to see which firmware packages are available on the Fabric Interconnect.

Look at the Version field to see which versions are available. Here I can see the existing firmware bundle. I need to download the new version from the Cisco site and upload it to the FI.

Since I have only B-series servers and the FI, I'm downloading the B-series bundle and the Infra bundle.

You can also see that the state is Active.
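The choice of bundles follows from the hardware you have, which can be written down as a simple lookup. This is a rough sketch: the hardware keys and the `bundles_needed` function are names I made up for illustration, and the C-series entry is included only for completeness since I have no rack servers here.

```python
# Hypothetical mapping from hardware type to the firmware bundle that covers it.
BUNDLE_FOR_HARDWARE = {
    "fabric_interconnect": "Infra",
    "b_series": "B series",
    "c_series": "C series",
}


def bundles_needed(hardware):
    """Return the sorted, de-duplicated list of bundles for the given hardware."""
    return sorted({BUNDLE_FOR_HARDWARE[item] for item in hardware})


if __name__ == "__main__":
    # My setup: B-series blades plus the Fabric Interconnects.
    print(bundles_needed(["b_series", "fabric_interconnect"]))
```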

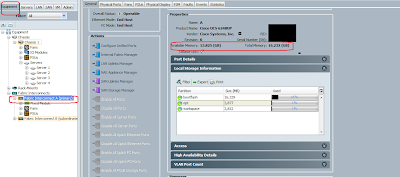

Now go ahead and download the bundles from the Cisco site (an authorised login is needed) to the laptop and upload them to the FI. Before uploading, check that enough space is available on the FI.

Go to Equipment -- primary Fabric Interconnect -- General tab.

Look for the total memory and available memory. Here we have enough memory for the upload.

If you look at the bootflash, only 16% is used.
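The space check is simple arithmetic, sketched below. The function names and all sizes are illustrative assumptions of mine (the only figure taken from my setup is the 16% usage); plug in the values shown on your own General tab and the real sizes of the downloaded bundles.

```python
def percent_used(total_mb, used_mb):
    """Percentage of the partition that is already in use."""
    if total_mb <= 0:
        raise ValueError("total_mb must be positive")
    return 100.0 * used_mb / total_mb


def bundles_fit(total_mb, used_mb, bundle_sizes_mb, headroom_mb=0):
    """True when all bundles plus optional headroom fit in the free space."""
    free_mb = total_mb - used_mb
    return sum(bundle_sizes_mb) + headroom_mb <= free_mb


if __name__ == "__main__":
    # Made-up sizes: a 1000 MB partition with 160 MB used is 16% full.
    print(percent_used(1000, 160))
    print(bundles_fit(1000, 160, [300, 400]))
```

The `headroom_mb` parameter is there so you can leave some slack rather than filling the partition to the last megabyte.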

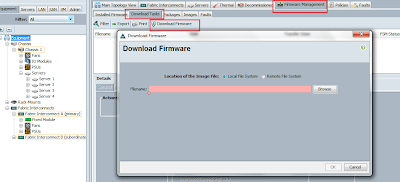

Click on Download Tasks on the same page and then click Download Firmware. A Download Firmware window will appear.

Choose Local File System, browse to the file on the local disk/laptop, and start the upload to the Fabric Interconnect.

Once the upload completes, you can see the status on the General tab of the bottom pane.

In the same window, click on the Update Firmware tab.

You will get the Update Firmware window.

In this window you can see the running version, startup version and backup version.

There is a drop-down list for the backup version of each upgradable component.

Choose the latest version in the drop-down list for each component and then click the Apply button.

You can see the status of each component changing from ready to scheduled and then updating.

Afterwards, the new version is shown as the running version and the old version as the backup version.

Some cards need a reboot to reflect the new version. You may need a server reboot in that case.

You can also see the FI status as activating.

Well, it's very simple, and the firmware is updated.
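To make the running/startup/backup relationship concrete, here is a toy model of how the versions move between slots. It is a simplified sketch of the behaviour described above, not how UCS Manager is implemented; the `FirmwareSlots` class and its method names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FirmwareSlots:
    running: str
    startup: str
    backup: Optional[str] = None

    def update(self, new_version: str) -> None:
        # Updating only stages the new image in the backup slot.
        self.backup = new_version

    def activate(self) -> None:
        # Activating swaps the staged image in; the old one becomes the backup.
        if self.backup is None:
            raise RuntimeError("no staged version to activate")
        self.running, self.backup = self.backup, self.running
        self.startup = self.running


if __name__ == "__main__":
    slots = FirmwareSlots(running="2.0(2q)", startup="2.0(2q)")
    slots.update("2.0(3a)")
    slots.activate()
    print(slots)
```

Keeping the old version in the backup slot is what leaves you something to fall back to if the new firmware misbehaves.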

Thanks for reading

Jibby George
